Earth Resource Data Cloud: Data Resource Details

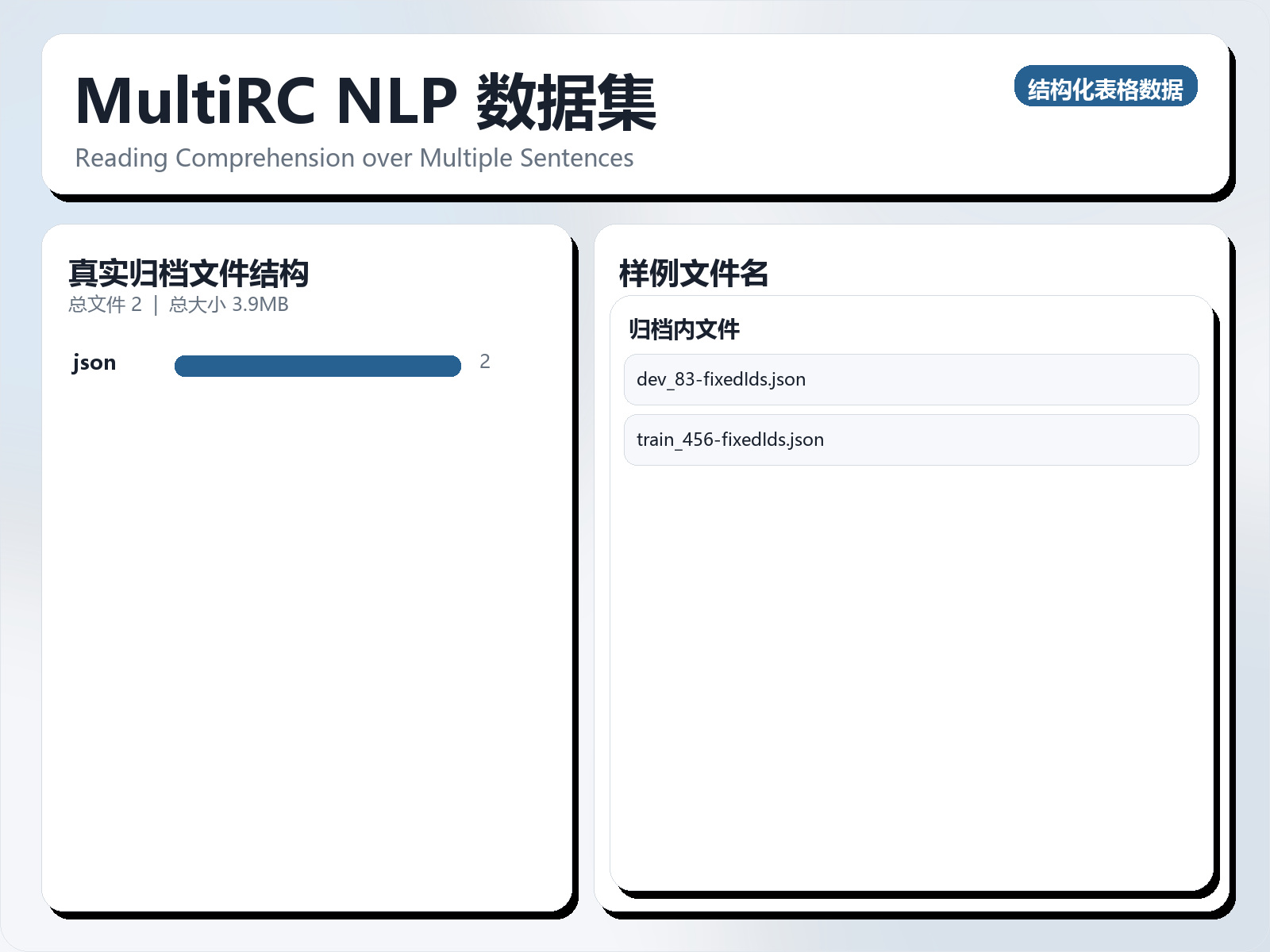

MultiRC NLP Dataset


Summary Overview

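The "MultiRC NLP Dataset" is mainly intended for supervised learning tasks. The data is primarily text, and the application scenarios lean toward text content analysis. Title description: Reading Comprehension over Multiple Sentences.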

Task type: supervised learning on text.

Suggested workflow: clean and tokenize the text first, then compare a TF-IDF + linear model baseline against a pretrained language model.
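The first half of this workflow (cleaning, tokenization, TF-IDF weighting) can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the dataset authors' pipeline: the helper names `tokenize` and `tfidf_vectors`, the smoothed IDF formula, and the toy input strings are all hypothetical, and the linear model and pretrained language model steps are not shown.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and keep runs of letters and digits (a deliberately simple cleaner)."""
    return re.findall(r"[a-z0-9]+", text.lower())


def tfidf_vectors(docs):
    """Return one {term: weight} dict per document, using a smoothed IDF."""
    tokenized = [tokenize(d) for d in docs]
    n_docs = len(tokenized)
    # Document frequency: in how many documents each term appears.
    doc_freq = Counter(term for toks in tokenized for term in set(toks))
    # Smoothed IDF (an assumption; other variants exist).
    idf = {t: math.log((1 + n_docs) / (1 + df)) + 1.0 for t, df in doc_freq.items()}
    vectors = []
    for toks in tokenized:
        counts = Counter(toks)
        total = len(toks) or 1  # avoid division by zero on empty text
        vectors.append({t: (c / total) * idf[t] for t, c in counts.items()})
    return vectors


# Hypothetical toy inputs: each string joins a question with one candidate answer.
examples = [
    "Who wrote the letter? The captain wrote it.",
    "Who wrote the letter? The weather was cold.",
]
vectors = tfidf_vectors(examples)
```

Terms that occur in only one of the two strings (such as "captain") receive a higher IDF than terms shared by both, which is the signal a linear classifier would then consume.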

Evaluation advice: use stratified splitting or cross-validation, and focus first on classification metrics such as F1, Recall, and AUC.
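As a rough illustration of this advice, the sketch below performs a stratified train/test split and computes precision, recall, and F1 for the positive class using only the standard library. The function names, the 25% test fraction, and the toy labels are assumptions made for the example; AUC is omitted because it needs predicted scores rather than hard labels.

```python
import random
from collections import defaultdict


def stratified_split(labels, test_fraction=0.25, seed=0):
    """Return (train_idx, test_idx), sampling the test part separately within each label."""
    by_label = defaultdict(list)
    for idx, label in enumerate(labels):
        by_label[label].append(idx)
    rng = random.Random(seed)
    train_idx, test_idx = [], []
    for indices in by_label.values():
        rng.shuffle(indices)
        # At least one test example per label, otherwise the rounded share.
        n_test = max(1, round(len(indices) * test_fraction))
        test_idx.extend(indices[:n_test])
        train_idx.extend(indices[n_test:])
    return sorted(train_idx), sorted(test_idx)


def precision_recall_f1(y_true, y_pred, positive=1):
    """Compute precision, recall, and F1 for the given positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# Hypothetical imbalanced labels: 4 correct answer-options, 8 incorrect ones.
labels = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
train_idx, test_idx = stratified_split(labels)
```

Splitting within each label keeps the share of correct and incorrect answer-options similar in both parts, which matters when one class is much rarer than the other.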

Available files: no standard CSV file was detected; check the index or README files in the directory first.


Introduction

MultiRC (Multi-Sentence Reading Comprehension) is a dataset of short paragraphs and multi-sentence questions that can be answered from the content of the paragraph. We have designed the dataset with three key challenges in mind:

1. The number of correct answer-options for each question is not pre-specified. This removes the over-reliance of current approaches on answer-options and forces them to decide on the correctness of each candidate answer independently of others. In other words, unlike previous work, the task here is not to simply identify the best answer-option, but to evaluate the correctness of each answer-option individually.

2. The correct answer(s) is not required to be a span in the text.

3. The paragraphs in our dataset have diverse provenance by being extracted from 7 different domains such as news, fiction, historical text etc., and hence are expected to be more diverse in their contents as compared to single-domain datasets.
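Because each answer-option is judged on its own, one common way to frame the task is to expand every question into independent binary instances. The sketch below shows that idea under stated assumptions: the `Instance` fields, the `flatten` and `exact_match` helpers, and the toy paragraph are hypothetical and do not reflect the dataset's actual file schema.

```python
from dataclasses import dataclass


@dataclass
class Instance:
    paragraph: str
    question: str
    answer: str
    label: int  # 1 if this answer-option is correct, else 0


def flatten(paragraph, question, options):
    """Turn one question with (answer_text, is_correct) options into binary instances."""
    return [Instance(paragraph, question, text, int(ok)) for text, ok in options]


def exact_match(gold_labels, predicted_labels):
    """True only if every answer-option of the question is judged correctly."""
    return list(gold_labels) == list(predicted_labels)


# Hypothetical toy question with two correct options and one incorrect option.
instances = flatten(
    "The ship left port at dawn. The captain wrote a letter before leaving.",
    "What happened before the ship left?",
    [
        ("The captain wrote a letter", True),
        ("A letter was written", True),
        ("The ship sank", False),
    ],
)
gold = [inst.label for inst in instances]
```

With this framing, a classifier sees one (paragraph, question, answer) triple at a time, so any number of options per question can be correct, and a per-question check such as `exact_match` only passes when all of them are classified correctly.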

The goal of this dataset is to encourage the research community to explore approaches that can do more than sophisticated lexical-level matching.