Earth Resources Data Cloud: Data Resource Details

notMNIST Letter Image Classification Dataset

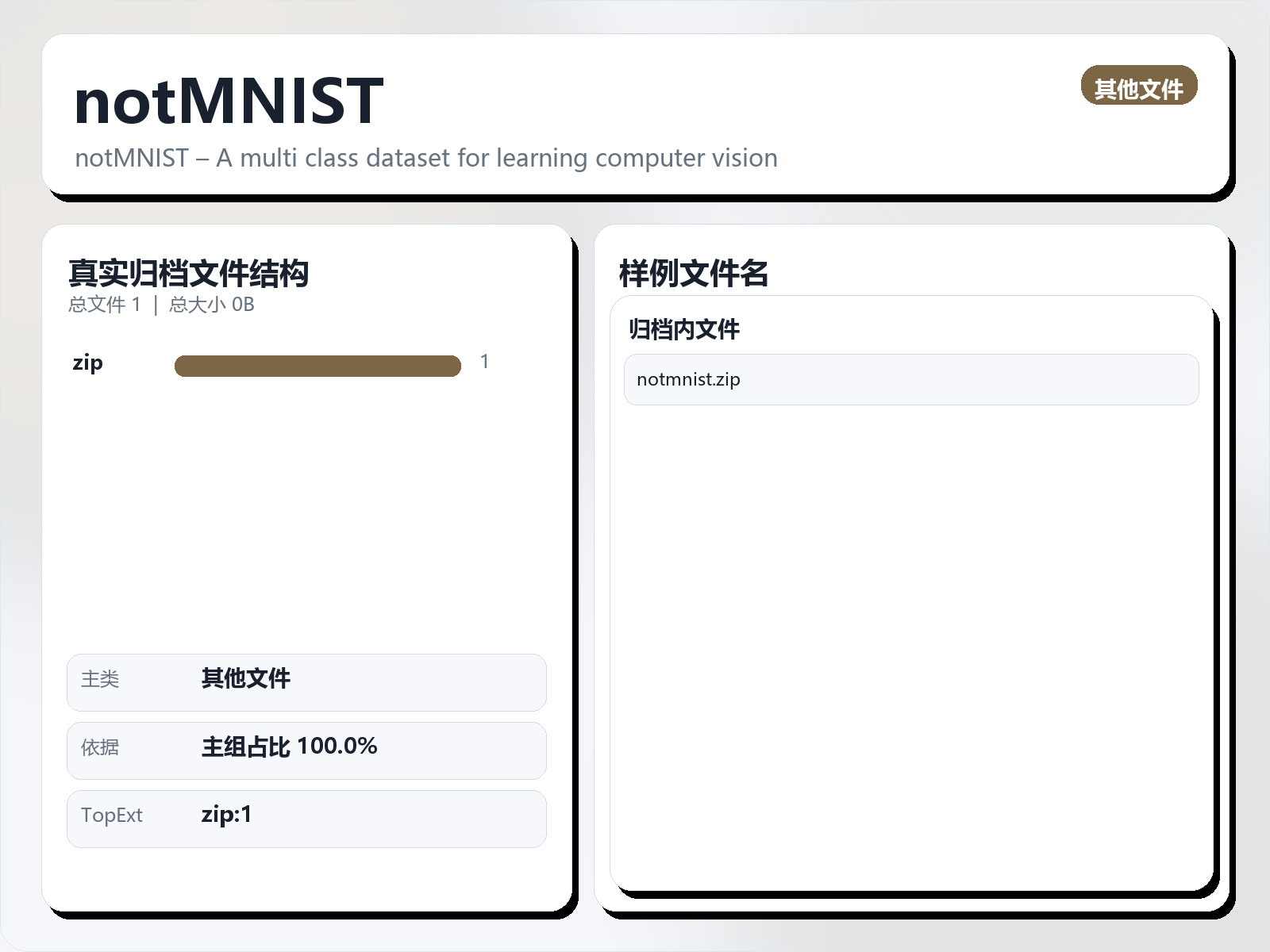


Summary Overview

The notMNIST dataset is intended mainly for multi-class classification, and its data consists primarily of images. Title description: notMNIST – A multi class dataset for learning computer vision

Task type: multi-class image classification.

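Suggested workflow: first inspect the class distribution and look for dirty samples, then build a baseline with transfer learning (e.g., ResNet/EfficientNet).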
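The first step of that workflow, inspecting the class distribution, can be sketched with the standard library alone. This is a minimal sketch that assumes the images are stored in one sub-folder per letter (for example `A/`, `B/`, ..., `J/`) under a root directory; that layout, the function name `count_classes`, and the path in the usage note are assumptions, not something this page confirms. It also flags zero-byte files, which is only one crude proxy for dirty samples.

```python
from collections import Counter
from pathlib import Path


def count_classes(root):
    """Count non-empty files per class folder and collect zero-byte files.

    Assumes (hypothetically) one sub-folder per class under `root`.
    """
    counts = Counter()
    empty_files = []
    for class_dir in sorted(Path(root).iterdir()):
        if not class_dir.is_dir():
            continue  # skip stray files at the top level
        for f in class_dir.iterdir():
            if not f.is_file():
                continue
            if f.stat().st_size == 0:
                # A zero-byte file cannot be decoded as an image.
                empty_files.append(f)
            else:
                counts[class_dir.name] += 1
    return counts, empty_files
```

A call such as `counts, empty = count_classes("notMNIST_small")` (hypothetical path) returns the per-letter counts and the list of empty files; strongly uneven counts would suggest reweighting or resampling before training a baseline.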

Evaluation advice: use a stratified split or cross-validation, and focus on classification metrics such as F1, Recall, and AUC.
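To illustrate the evaluation advice, here is a dependency-free sketch of a stratified train/test split and a macro-averaged F1 score. The helper names `stratified_split` and `macro_f1`, the default 20% test fraction, and the fixed seed are illustrative assumptions; in practice a library such as scikit-learn offers equivalent, better-tested utilities.

```python
import random
from collections import defaultdict


def stratified_split(labels, test_fraction=0.2, seed=0):
    """Return (train_idx, test_idx) with each class split at the same ratio."""
    by_class = defaultdict(list)
    for i, y in enumerate(labels):
        by_class[y].append(i)
    rng = random.Random(seed)
    train_idx, test_idx = [], []
    for y in sorted(by_class):
        idx = by_class[y]
        rng.shuffle(idx)
        # At least one test sample per class, so every class is evaluated.
        n_test = max(1, round(len(idx) * test_fraction))
        test_idx.extend(idx[:n_test])
        train_idx.extend(idx[n_test:])
    return train_idx, test_idx


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 scores."""
    classes = sorted(set(y_true) | set(y_pred))
    scores = []
    for c in classes:
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        fp = sum(t != c and p == c for t, p in zip(y_true, y_pred))
        fn = sum(t == c and p != c for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        # F1 = 2*TP / (2*TP + FP + FN); defined as 0 when the class never occurs.
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)
```

Macro averaging weights all ten letters equally, so a model that does poorly on one letter is penalized even if that letter is rare in the split.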

Available files: no standard CSV was detected; check the directory for an index or README file first.

Context

The notMNIST dataset was created by extracting glyphs from publicly available fonts to make a dataset similar to MNIST. There are 10 classes, with the letters A-J taken from different fonts.

Judging by the examples, one would expect this to be a harder task than MNIST. This seems to be the case: logistic regression on top of a stacked autoencoder with fine-tuning gets about 89% accuracy, whereas the same approach gets 98% on MNIST. The dataset consists of a small hand-cleaned part, about 19k instances, and a large uncleaned part, 500k instances.

The two parts have label error rates of approximately 0.5% and 6.5%, respectively. I got these figures by looking through glyphs and counting how often my guess of the letter didn't match its Unicode value in the font file.
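The counting procedure described above amounts to a mismatch rate between a human guess and the recorded label. The sketch below shows that calculation and what the quoted rates imply in absolute terms; the function names are hypothetical, and the instance counts (19k and 500k) are the approximate figures from the text, so the results are rough estimates only.

```python
def label_error_rate(guessed, recorded):
    """Fraction of samples where the human guess differs from the recorded label."""
    if len(guessed) != len(recorded):
        raise ValueError("guessed and recorded must have the same length")
    if not guessed:
        raise ValueError("need at least one sample")
    mismatches = sum(g != r for g, r in zip(guessed, recorded))
    return mismatches / len(guessed)


def expected_mislabeled(n_instances, error_rate):
    """Rough number of mislabeled instances implied by an error rate."""
    return round(n_instances * error_rate)


# Approximate figures from the text: ~19k cleaned at 0.5%, 500k uncleaned at 6.5%.
small_bad = expected_mislabeled(19_000, 0.005)
large_bad = expected_mislabeled(500_000, 0.065)
```

Under these figures the cleaned part would contain on the order of a hundred wrong labels and the uncleaned part tens of thousands, which is why checking for dirty samples matters more when training on the large set.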

This dataset is used extensively in the Udacity Deep Learning course, and is available in the TensorFlow GitHub repo (under Examples). I'm not aware of any license governing the use of this data, so I'm posting it here so that the community can use it with Kaggle kernels.